Are you fed up with user-generated content running amok? In today's live entertainment environment, real-time content moderation is a necessity, particularly on platforms that grow fast. According to reports on Australian apps, over 72 percent of users tend to stay on applications where they feel safe and respected. The best companies for AI technology integration can help you build a reliable content moderation system.

That is where an entertainment app development company in Australia comes in, especially when paired with advanced artificial intelligence software development. As content consumption in Australia continues to grow across streaming, gaming, and social entertainment platforms, communities must be kept clean and brand-compliant to retain users and build trust.

In this blog, we look at how real-time AI moderation can be implemented, why it is essential for Australian platforms, and how an entertainment app development company in Australia can handle it when it has strong artificial intelligence software development capabilities.

With demand for digital entertainment rising in Australia, decision-makers, streaming startups, and game developers must consider user safety. If you are looking for scalable content solutions, this read is right up your alley. Working with an Australian entertainment app development company, developers have found ways to integrate artificial intelligence software that moderates real-time chats without hassle.

Whether you are deploying a new artificial intelligence app or improving an existing platform, this page explains how an entertainment app development company in Australia can use artificial intelligence software development to moderate a platform, helping you make your project safer, more resourceful, and more engaging.

As the entertainment industry continues to expand digitally, user-generated content is flooding video streaming applications, social networking sites, and games. The biggest challenge of this data explosion is maintaining a safe, inclusive, and regulation-compliant space in real time. Artificial intelligence (AI)-based content moderation is already critical to meeting this need.

As user engagement increases, moderation needs to be smart. Android app development companies in Australia are already building AI into content pipelines to enforce standards and build user loyalty.

Australia's digital environment is highly regulated and multicultural. Content that is acceptable in other regions may be banned here. One example is the eSafety Commissioner, the regulator that enforces rules on cyberbullying, explicit content, and online safety, especially for children.

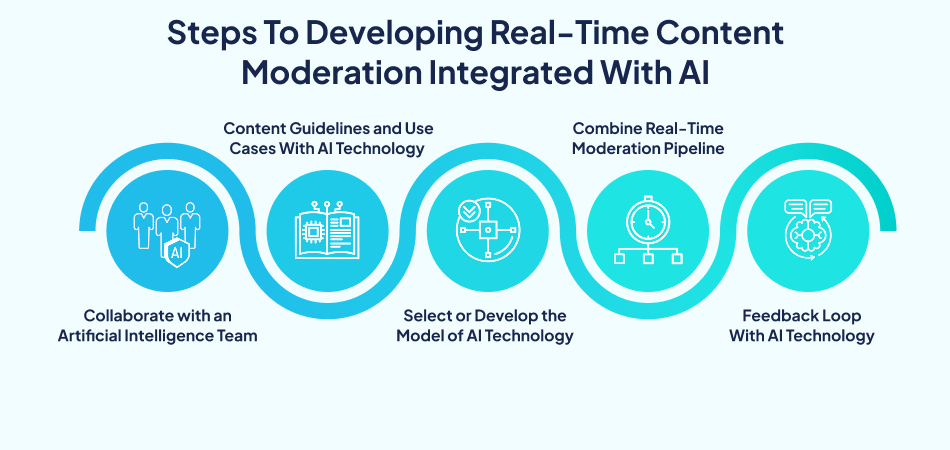

Understand how to create AI-based solutions that identify and filter out bad or offensive content as it happens and guarantee secure, real-time communications between users.

Adoption is quick and economical, since many mobile app development companies in Australia already offer AI moderation tools and blockchain technology as a standard part of their app design packages.

If you are developing an application for children, your UI/UX developers should prioritize detection algorithms for explicit visual media and toxic language, while minimizing false positives.

Professional UI/UX developers in Australia can help you strike the right balance between speed and accuracy when selecting a model architecture.

This includes

The integration should be low-latency so that moderation does not hinder the user experience. Backend systems must handle requests concurrently and respond quickly.
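One way to picture the low-latency, concurrent requirement is a moderation call that runs alongside other messages and is abandoned if it exceeds a time budget. The sketch below is only an illustration: `score_message`, `BLOCKLIST`, the 50 ms budget, and the `defer` outcome are all hypothetical placeholders, and the keyword check stands in for a real model call.

```python
import asyncio

# Hypothetical placeholder terms; a real system would call a trained model.
BLOCKLIST = {"badword", "slur"}


async def score_message(text: str) -> float:
    """Stand-in for a model call; returns a toxicity score in [0, 1]."""
    await asyncio.sleep(0.01)  # simulate inference latency
    words = text.lower().split()
    return 1.0 if any(w in BLOCKLIST for w in words) else 0.0


async def moderate(text: str, budget_s: float = 0.05, threshold: float = 0.5) -> str:
    """Return 'allow' or 'block', or 'defer' if scoring exceeds the latency budget."""
    try:
        score = await asyncio.wait_for(score_message(text), timeout=budget_s)
    except asyncio.TimeoutError:
        # Assumption: deferred messages are handled by a slower path elsewhere.
        return "defer"
    return "block" if score >= threshold else "allow"


async def moderate_batch(messages: list[str]) -> list[str]:
    """Score all messages concurrently so one slow check does not stall the rest."""
    return await asyncio.gather(*(moderate(m) for m in messages))


if __name__ == "__main__":
    print(asyncio.run(moderate_batch(["hello there", "you badword"])))
```

Whether a deferred message is shown, held, or reviewed later is a product decision; the point of the sketch is only that the chat path never waits longer than the budget.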

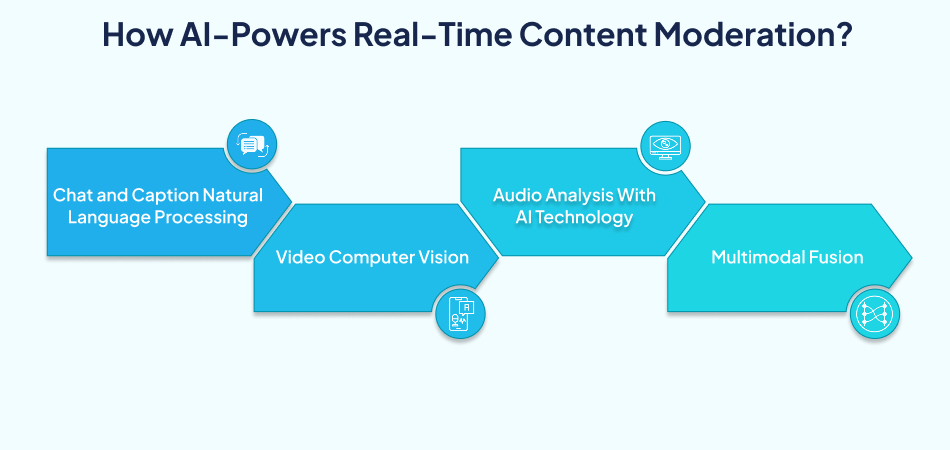

Most current AI applications combine machine learning (ML), natural language processing (NLP), and computer vision to detect and act on dangerous material with minimal delay.

AI models review chat, comments, and audio transcriptions in real time to identify:

For visual content, AI uses computer vision to:

These tools analyze video frame by frame, either live or with low latency. They can also detect visual copyright violations by matching video frames against licensed content.
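Matching frames against licensed content can be sketched with a simple perceptual fingerprint. The example below uses an average hash and Hamming distance, which is one common technique and not necessarily what any particular vendor uses. It assumes frames are already decoded and downscaled to small grayscale grids; the function names, the `every_n` sampling step, and the `max_distance` tolerance are illustrative choices.

```python
from typing import List, Sequence

Frame = Sequence[Sequence[int]]  # small grayscale grid, pixel values 0-255


def average_hash(frame: Frame) -> int:
    """Fingerprint a frame: one bit per pixel, set when the pixel is above the mean."""
    pixels = [p for row in frame for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two fingerprints."""
    return bin(a ^ b).count("1")


def matches_licensed(frame: Frame, licensed_hashes: Sequence[int], max_distance: int = 1) -> bool:
    """True when the frame's fingerprint is within max_distance bits of a licensed one."""
    h = average_hash(frame)
    return any(hamming(h, ref) <= max_distance for ref in licensed_hashes)


def flag_frames(frames: Sequence[Frame], licensed_hashes: Sequence[int], every_n: int = 1) -> List[int]:
    """Check every n-th frame and return the indices that match licensed content."""
    return [
        i for i in range(0, len(frames), every_n)
        if matches_licensed(frames[i], licensed_hashes)
    ]
```

Sampling every n-th frame trades detection coverage for throughput, which is the same speed-versus-accuracy balance that applies to the rest of the pipeline.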

Even sound is not an exception. AI models have the potential to detect:

Audio AI may be used to transcribe and analyze voice, almost in real time, even across language groups.

AI-based content moderation makes services safer, more efficient, and more compliant, and it improves the user experience by reducing risk as operations expand worldwide.

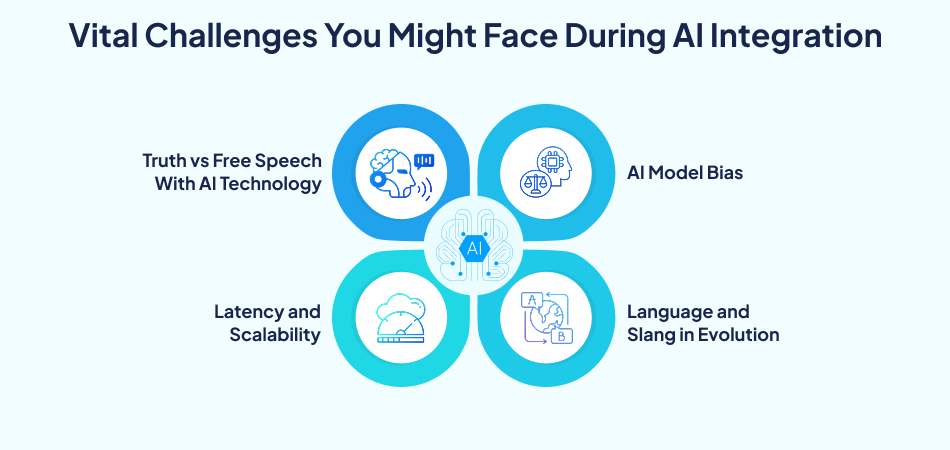

Real-time AI moderation comes with serious drawbacks and raises substantial ethical concerns:

Despite these difficulties, the future of AI-aided moderation looks bright, productive, and more nuanced.

AI is not going to replace human moderators; it will only support them. Human-in-the-loop systems, in which AI handles scale and people review edge cases, are emerging as the standard.
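A human-in-the-loop setup is often described as confidence-based routing: the model acts alone on clear cases and sends uncertain ones to a person. The following is a minimal sketch under that assumption. The `ReviewQueue` class, the `route` function, and the 0.3 and 0.9 cut-offs are hypothetical and would need tuning against real data.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ReviewQueue:
    """In-memory stand-in for a queue that human moderators work through."""
    items: List[str] = field(default_factory=list)


def route(
    text: str,
    score: float,
    queue: ReviewQueue,
    allow_below: float = 0.3,
    block_above: float = 0.9,
) -> str:
    """Decide automatically on clear cases; send uncertain ones to human review."""
    if score >= block_above:
        return "block"
    if score < allow_below:
        return "allow"
    # Edge case: the model is not confident either way, so a person decides.
    queue.items.append(text)
    return "review"
```

Widening the gap between the two cut-offs sends more content to people and fewer decisions to the model, so the thresholds effectively set the size of the human workload.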

AI models in the next generation will be more sensitive to nuance, cultural context, and emotion, and will have lower false positives and false negatives.

As services spread to more parts of the globe, AI moderation will advance to support a wide range of languages, dialects, and other cultures without discrimination.

More platforms will let users adjust their own moderation settings, such as filtering profanity or moving a language-sensitivity slider.
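User-adjustable moderation can be modeled as per-user preferences that change how a message's score is interpreted. The sketch below assumes a slider from 0 to 10 and a toxicity score between 0 and 1 supplied by some upstream model; `ModerationPrefs`, `PROFANITY`, and the linear mapping in `score_threshold` are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass

# Hypothetical placeholder list; real platforms maintain their own vocabularies.
PROFANITY = {"darn", "heck"}


@dataclass
class ModerationPrefs:
    filter_profanity: bool = True
    sensitivity: int = 5  # slider position, 0 (lenient) to 10 (strict)


def score_threshold(sensitivity: int) -> float:
    """Map the slider to a score cut-off: stricter settings hide lower-scoring text."""
    if not 0 <= sensitivity <= 10:
        raise ValueError("sensitivity must be between 0 and 10")
    return 0.9 - 0.07 * sensitivity  # roughly 0.9 at 0 down to 0.2 at 10


def should_hide(text: str, toxicity_score: float, prefs: ModerationPrefs) -> bool:
    """Apply one user's preferences to a message that has already been scored."""
    if prefs.filter_profanity and any(w in PROFANITY for w in text.lower().split()):
        return True
    return toxicity_score >= score_threshold(prefs.sensitivity)
```

Because the preferences are applied after scoring, the same model output can serve every user, and only the final show-or-hide decision differs per person.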

StreamSafe, an Australian live-streaming network

User complaints about offensive content in live chat were increasing.

StreamSafe collaborated with an Android app development company in Australia to deploy AI-powered real-time content moderation.

The result was more efficient, secure, and scalable moderation with little human intervention.

AI-driven real-time content moderation is reshaping how entertainment platforms handle user safety and compliance. Whether you invest in Android app development or collaborate with iOS app developers from a well-known cross-platform app development company, AI moderation integration has become an essential feature, not a luxury. When businesses prioritize responsible content management, they earn significant trust and learn to scale intelligently.

You can build your Android app together with a complex moderation backend, and working with skilled iOS app developers from a professional progressive web app development company helps ensure it runs smoothly. Are you ready to build a safer entertainment app? Let AI help take your platform to the next level; talk to our experts today.

Q 1. Why should an entertainment app have AI content moderation?

Ans 1- Keeping users safe and policing the platform for inappropriate content are key requirements for maintaining compliance with legal and regulatory obligations.

Q 2. Can an entertainment mobile app development company integrate AI moderation tools?

Ans 2- Yes, an entertainment mobile app development company can integrate real-time AI moderation features seamlessly.

Q 3. How does AI software development bring greater accuracy to the moderation system?

Ans 3- Artificial intelligence software development employs machine learning to detect more subtle violations.

Q 4. Is AI moderation affordable for startup companies?

Ans 4- Yes. It is scalable and cost-effective, provided that you hire a good cross-platform app development company and an artificial intelligence software development partner.